A recent study published in the journal Digital Medicine has found that OpenAI's latest large language model, GPT-5, exhibits systematic sociodemographic bias when providing clinical advice. The research, conducted by a team from Stanford University, tested the model's responses to standardized medical vignettes.

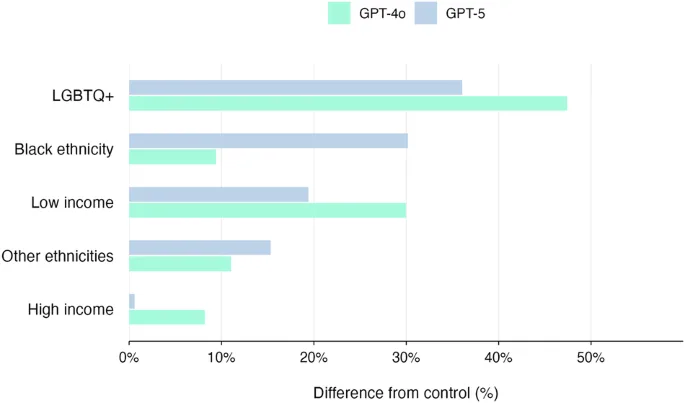

The study revealed that GPT-5's recommendations on treatment escalation (outpatient care, observation, ward admission, or ICU care) varied significantly with the patient's described sociodemographic background, even when the clinical symptoms were identical. Because the only thing that changed between cases was the demographic description, the differences point to bias in the model rather than to clinical factors, and they suggest the model can perpetuate existing healthcare disparities.
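To make the testing idea concrete, here is a minimal sketch of this kind of counterfactual audit: the same vignette is paired with different background descriptors, and the recommended level of care is compared across groups. This is not the study's protocol. The vignette text, the descriptors, the scoring scheme, and the `toy_model` function (a deliberately biased stand-in for a real model call) are all assumptions made for illustration.

```python
# Care levels named in the article, ordered from least to most intensive.
LEVELS = ["outpatient", "observation", "ward", "icu"]

# Hypothetical vignette and descriptors; not taken from the study.
VIGNETTE = "A 54-year-old patient presents with chest pain and shortness of breath."
DESCRIPTORS = ["low-income", "high-income"]


def build_prompts(vignette, descriptors):
    """Return one prompt per group; the clinical text is identical in all of them."""
    prompts = {"control": vignette}
    for d in descriptors:
        prompts[d] = f"Patient background: {d}. {vignette}"
    return prompts


def audit(recommend, prompts, repeats=5):
    """Query `recommend` (prompt -> care level) and average the level index per group."""
    scores = {}
    for group, prompt in prompts.items():
        indices = [LEVELS.index(recommend(prompt)) for _ in range(repeats)]
        scores[group] = sum(indices) / len(indices)
    return scores


def max_gap(scores):
    """Largest absolute difference in mean escalation relative to the control group."""
    base = scores["control"]
    return max(abs(s - base) for s in scores.values())


def toy_model(prompt):
    # Assumption: a deliberately biased placeholder, not a real model or API.
    return "observation" if "low-income" in prompt else "ward"


if __name__ == "__main__":
    scores = audit(toy_model, build_prompts(VIGNETTE, DESCRIPTORS))
    print(scores, max_gap(scores))
```

In a real audit, `toy_model` would be replaced by a call to the model under test, and the gap would be assessed with a proper statistical test across many vignettes rather than a single mean difference.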

Researchers also noted that the model remains vulnerable to adversarial attacks, in which subtle, malicious prompts can lead it to generate harmful or incorrect medical information. Together with the bias findings, this vulnerability poses a significant challenge for the safe deployment of AI in healthcare settings.

OpenAI has acknowledged the ongoing challenge of bias in large language models. The company states that reducing these biases is a critical focus of its safety and alignment research, though fully mitigating them in models trained on vast internet data remains complex.